It is the most performant approach for incrementally loading new files. You can copy new files only, where files or folders has already been time partitioned with timeslice information as part of the file or folder name (for example, /yyyy/mm/dd/file.csv). Loading new files only by using time partitioned folder or file name. Incrementally copy new and changed files based on LastModifiedDate from Azure Blob storage to Azure Blob storage.For example, if the unit is MONTH and the slicelength is 2, then each slice is 2 months wide. how many units of time are contained in the slice). This indicates the width of the slice (i.e. The expression must be of type DATE or TIMESTAMPNTZ. Please be aware that if you let ADF scan huge amounts of files but you only copy a few files to the destination, this will still take a long time because of the file scanning process. The function returns the start or end of the slice that contains this date or time. ADF will scan all the files from the source store, apply the file filter by their LastModifiedDate, and only copy the new and updated file since last time to the destination store. You can copy the new and changed files only by using LastModifiedDate to the destination store. Loading new and changed files only by using LastModifiedDate Incrementally copy data from Azure SQL Database to Azure Blob storage by using Change Tracking technology.The workflow for this approach is depicted in the following diagram:įor step-by-step instructions, see the following tutorial: It enables an application to easily identify data that was inserted, updated, or deleted. 
Incrementally copy data from multiple tables in a SQL Server instance to Azure SQL Databaseĭelta data loading from SQL DB by using the Change Tracking technologyĬhange Tracking technology is a lightweight solution in SQL Server and Azure SQL Database that provides an efficient change tracking mechanism for applications.Incrementally copy data from one table in Azure SQL Database to Azure Blob storage.

The workflow for this approach is depicted in the following diagram:įor step-by-step instructions, see the following tutorials: The delta loading solution loads the changed data between an old watermark and a new watermark.

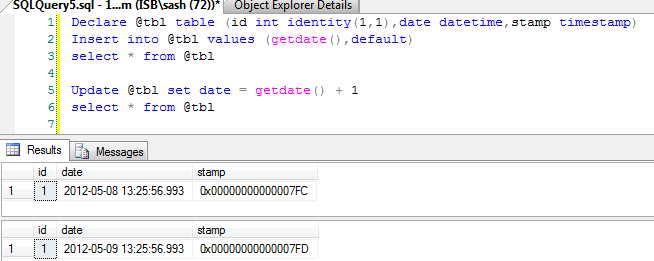

A watermark is a column that has the last updated time stamp or an incrementing key. In this case, you define a watermark in your source database. Delta data loading from database by using a watermark The tutorials in this section show you different ways of loading data incrementally by using Azure Data Factory. In a data integration solution, incrementally (or delta) loading data after an initial full data load is a widely used scenario. Microsoft Fabric covers everything from data movement to data science, real-time analytics, business intelligence, and reporting. Try out Data Factory in Microsoft Fabric, an all-in-one analytics solution for enterprises.
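The watermark approach described above can be sketched in plain Python. This is only an illustration of the idea (load the rows that fall between the old watermark and the new one, then advance the watermark), not how Azure Data Factory implements it. It uses an in-memory SQLite database as a stand-in for the source database, and the names `orders`, `last_modified`, `watermark`, `make_demo_db`, and `load_delta` are all hypothetical.

```python
import sqlite3


def make_demo_db():
    """Build a small in-memory database (hypothetical schema for illustration)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER, item TEXT, last_modified TEXT)")
    conn.execute("CREATE TABLE watermark (table_name TEXT, watermark_value TEXT)")
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?, ?)",
        [
            (1, "a", "2024-01-01T00:00:00"),
            (2, "b", "2024-01-02T00:00:00"),
            (3, "c", "2024-01-03T00:00:00"),
        ],
    )
    # Assume row 1 was already copied by the initial full load.
    conn.execute("INSERT INTO watermark VALUES ('orders', '2024-01-01T00:00:00')")
    conn.commit()
    return conn


def load_delta(conn, table, watermark_column):
    """Return rows changed since the stored watermark, then advance the watermark.

    `table` and `watermark_column` are interpolated into SQL, so they must be
    trusted identifiers, never user input.
    """
    cur = conn.cursor()
    # Old watermark: the value saved at the end of the previous run.
    cur.execute("SELECT watermark_value FROM watermark WHERE table_name = ?", (table,))
    old_watermark = cur.fetchone()[0]
    # New watermark: the current maximum of the watermark column in the source.
    cur.execute(f"SELECT MAX({watermark_column}) FROM {table}")
    new_watermark = cur.fetchone()[0]
    if new_watermark is None or new_watermark <= old_watermark:
        return []  # nothing changed since the last run
    # Delta: rows strictly after the old watermark, up to and including the new one.
    cur.execute(
        f"SELECT * FROM {table} "
        f"WHERE {watermark_column} > ? AND {watermark_column} <= ? "
        f"ORDER BY {watermark_column}",
        (old_watermark, new_watermark),
    )
    rows = cur.fetchall()
    # Persist the new watermark so the next run starts from here.
    cur.execute(
        "UPDATE watermark SET watermark_value = ? WHERE table_name = ?",
        (new_watermark, table),
    )
    conn.commit()
    return rows


if __name__ == "__main__":
    demo = make_demo_db()
    print(load_delta(demo, "orders", "last_modified"))  # rows newer than the stored watermark
    print(load_delta(demo, "orders", "last_modified"))  # empty list: nothing new on a second run
```

ISO 8601 timestamps stored as text compare correctly as strings, which is why the sketch can use plain `>` and `<=`. An incrementing integer key would work the same way as the watermark column.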
